AI Has a Memory Problem. Decentralization and Privacy Might Have a Solution. Part 3

In the first part of this 3-part series, I covered AI memory and its classification, while in the second part, I discussed in detail the types of AI memory and security risks associated with the architectures.

Here, I will use Oasis technology as a reference for how decentralization and privacy could solve AI memory pain points. I will also talk about some working use cases for portable AI memory.

AI memory architectures have two distinct pain points: security threats, and data silos that lead to redundant work.

As a believer in decentralized AI (DeAI), I think attack risks are best addressed by security-first architectures that adopt a Zero-Trust model.

How does this work? All data is inspected at the ingestion stage, and any sensitive information is identified and redacted before it reaches vector storage. Role-Based Access Control (RBAC) and Attribute-Based Access Control (ABAC) are then enforced at the retrieval layer. Result: the system only considers the subset of documents that the specific user is authorized to see.
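The ingestion-then-retrieval flow described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the regex patterns, the `MemoryStore`, `Document`, and `User` names, and the keyword-overlap ranking (a stand-in for real vector similarity) are all assumptions made for the example.

```python
import re
from dataclasses import dataclass, field

# Hypothetical patterns; a real system would use a dedicated PII detector.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each detected sensitive span with a typed placeholder."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


@dataclass
class Document:
    text: str
    allowed_roles: set                              # RBAC: roles that may read it
    attributes: dict = field(default_factory=dict)  # ABAC: e.g. department


@dataclass
class User:
    role: str
    attributes: dict = field(default_factory=dict)


class MemoryStore:
    def __init__(self):
        self._docs = []

    def ingest(self, text, allowed_roles, attributes=None):
        # Redaction happens before anything is stored.
        self._docs.append(
            Document(redact(text), set(allowed_roles), dict(attributes or {}))
        )

    def retrieve(self, user, query):
        # Access control runs first, so unauthorized documents never reach ranking.
        visible = [d for d in self._docs if self._authorized(user, d)]
        terms = set(query.lower().split())
        # Keyword overlap stands in for vector similarity in this sketch.
        scored = [(len(terms & set(d.text.lower().split())), d.text) for d in visible]
        ranked = sorted(scored, key=lambda pair: -pair[0])
        return [text for score, text in ranked if score > 0]

    @staticmethod
    def _authorized(user, doc):
        role_ok = user.role in doc.allowed_roles
        attrs_ok = all(user.attributes.get(k) == v for k, v in doc.attributes.items())
        return role_ok and attrs_ok
```

The design choice worth noting is the order of operations in `retrieve`: filtering precedes ranking, so a document the user cannot see has no way to influence the result set.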

Now, what about the other pain point — information silos and redundant work? Simple answer: it is preventable. Let’s understand how, with reference to the multi-agent collaboration we discussed regarding stateful memory loops.

DeAI provides a shared workspace that lets different agents (for example, an Architecture Agent and an Implementation Agent) work in tandem, querying and updating the same memory instance. This is also the seed for AI context flow, where context is no longer lost to data silos.
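As a rough illustration of two agents querying and updating the same memory instance, here is a small in-process sketch. The `SharedWorkspace` and `Agent` classes are hypothetical names for this example; a real deployment would back the workspace with a networked or decentralized store rather than a Python list.

```python
import threading
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryEntry:
    author: str
    topic: str
    content: str


class SharedWorkspace:
    """One memory instance that every agent reads from and writes to."""

    def __init__(self):
        self._entries = []
        self._lock = threading.Lock()  # keeps concurrent agent writes consistent

    def write(self, author, topic, content):
        with self._lock:
            self._entries.append(MemoryEntry(author, topic, content))

    def read(self, topic):
        with self._lock:
            return [e for e in self._entries if e.topic == topic]


class Agent:
    def __init__(self, name, workspace):
        self.name = name
        self.workspace = workspace

    def record(self, topic, content):
        self.workspace.write(self.name, topic, content)

    def recall(self, topic):
        # Returns entries from all agents, not only this one.
        return [f"{e.author}: {e.content}" for e in self.workspace.read(topic)]


workspace = SharedWorkspace()
architect = Agent("architecture-agent", workspace)
implementer = Agent("implementation-agent", workspace)

architect.record("storage", "Use a vector index partitioned per tenant")
implementer.record("storage", "Partitioned index implemented behind the retrieval API")
print(implementer.recall("storage"))
```

Because both agents hold a reference to the same workspace, the Implementation Agent sees the architectural decision without it being re-sent or re-derived, which is the redundancy the shared memory is meant to remove.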

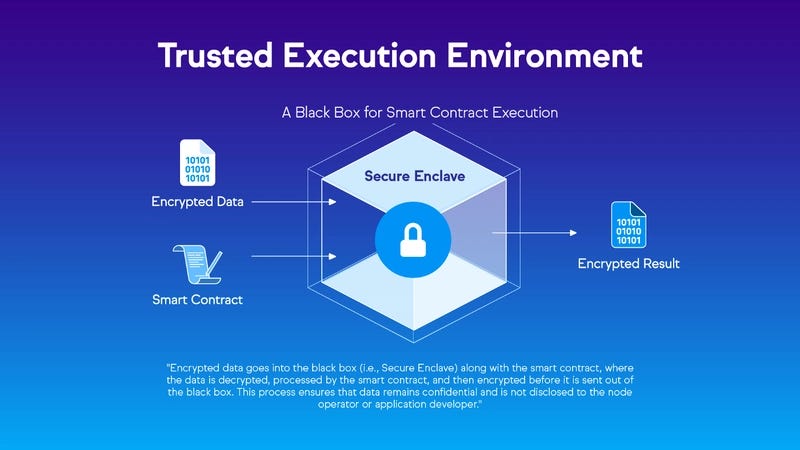

A serious bottleneck in securing AI memory is protecting data in use, that is, while it is being computed on. Traditional encryption can handle data at rest and in transit. But what about the information that is decrypted during the actual retrieval and inference processes?

Hardware-Backed Privacy via TEEs

Confidential Computing closes this security gap with hardware-backed Trusted Execution Environments (TEEs). These secure enclaves isolate memory so that its contents are cryptographically inaccessible to any unauthorized party, including the infrastructure operators. For AI systems, prompts and embeddings are only decrypted inside the enclave’s black box. As a result, vector searches and inference can run without exposing any raw data.

I use Oasis technology as the reference for a potential secure AI memory solution because of how it combines and optimizes on-chain and off-chain components: Sapphire, the first production-ready confidential Ethereum Virtual Machine (EVM), and the Runtime Off-chain Logic (ROFL) framework. Hardware isolation (Intel SGX/TDX) ensures that transaction inputs, return values, and the internal state of the smart contract remain confidential at all times.

For complex AI memory systems requiring computation-heavy processing, Oasis uses the ROFL framework instead of running all logic on-chain. Model training and inference can be done securely off-chain, while verifiable settlement happens on-chain. This combination is the foundation for trustless AI agents that can manage private keys and sensitive user context within a secure enclave. Personal information and sensitive data consequently become a portable, secure asset.
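The split between off-chain computation and on-chain settlement can be illustrated with a plain hash-commitment sketch. To be clear about the assumptions: this does not use any Oasis, ROFL, or Sapphire API, the `Ledger` class is a hypothetical in-memory stand-in for on-chain state, and the remote attestation that a real TEE provides is omitted entirely.

```python
import hashlib
import json


def digest(obj) -> str:
    """Deterministic SHA-256 commitment to a JSON-serializable object."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


class Ledger:
    """Stand-in for on-chain state: stores commitments only, never raw data."""

    def __init__(self):
        self.records = []

    def settle(self, input_hash: str, output_hash: str) -> int:
        self.records.append({"input": input_hash, "output": output_hash})
        return len(self.records) - 1  # record id

    def verify(self, record_id: int, output) -> bool:
        # Anyone holding the output can check it against the settled commitment.
        return self.records[record_id]["output"] == digest(output)


def offchain_inference(private_context: dict) -> dict:
    # Placeholder for heavy computation that would run inside an enclave.
    return {"context_size": sum(len(str(v)) for v in private_context.values())}


ledger = Ledger()
context = {"user": "alice", "note": "prefers email"}
result = offchain_inference(context)                        # computed off-chain
record_id = ledger.settle(digest(context), digest(result))  # settled "on-chain"
print(ledger.verify(record_id, result))
```

The ledger only ever sees two hashes, so the private context stays off-chain while the result remains checkable by anyone the user shares it with.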

Industry-Specific Implementations of AI Memory

What AI memory architectures need varies across regulated industries, so solution blueprints have to adapt to each one. Let’s talk about healthcare and finance, the two most heavily impacted areas.

Healthcare

Here, AI systems must adhere to strict standards like the Health Insurance Portability and Accountability Act (HIPAA) and Health Information Trust Alliance (HITRUST) to protect electronic Protected Health Information (ePHI). Such a memory architecture must include:

- Zero-Retention Architectures: Data is isolated and cannot be reused for any other training purpose. A volatile memory approach is ideal where data is processed in-memory and immediately discarded.

- FHIR-First Data Foundations: The Fast Healthcare Interoperability Resources (FHIR) standard helps create a standardized operational data layer. This eliminates schema chaos and enables AI assistants to retrieve clinical facts using a shared, consistent language.

- Auditability and Explainability: Verifiable audit trails for agentic workflows ensure that we know which medical databases were accessed and why. This is critical for regulatory compliance.
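One common way to make an audit trail verifiable is to chain entries by hash, so that altering any past entry invalidates everything after it. The sketch below assumes that approach; the `AuditTrail` class and its field names are hypothetical, and timestamps and signatures are left out to keep the example short and deterministic.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _hash(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    def __init__(self):
        self.entries = []

    def record(self, agent: str, resource: str, reason: str) -> None:
        """Append an entry that commits to the hash of the previous one."""
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"agent": agent, "resource": resource, "reason": reason, "prev": prev}
        self.entries.append({**body, "hash": _hash(body)})

    def verify(self) -> bool:
        """Recompute every hash and check that each link points to its predecessor."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or _hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.record("triage-agent", "lab-results-db", "fetch latest blood panel")
trail.record("triage-agent", "medication-db", "check drug interactions")
print(trail.verify())

trail.entries[0]["reason"] = "edited after the fact"  # simulated tampering
print(trail.verify())
```

Each entry answers the two questions from the list above, which database was accessed and why, and the chain makes silent edits detectable.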

Finance

Here, collaborative analytics must be carefully balanced against the risk of exposing private and proprietary data.

- Secure Multi-Party Computation (SMPC): An example would be where multiple banks are analysing transaction data for fraud detection, but no single institution can access another’s private customer records.

- Homomorphic Encryption: Financial institutions use this to perform computations directly on encrypted data, ensuring that sensitive financial parameters are never exposed during processing.
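To make the SMPC idea concrete, here is a toy additive secret-sharing example in which three banks compute a joint total of flagged transactions without revealing their individual counts. The bank names and counts are made up, and this is a teaching sketch rather than a hardened protocol: it assumes honest participants and ignores networking entirely.

```python
import secrets

PRIME = 2**61 - 1  # all arithmetic is done modulo this Mersenne prime


def share(value: int, n_parties: int) -> list:
    """Split a value into n random shares that sum to it modulo PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def reconstruct(shares: list) -> int:
    return sum(shares) % PRIME


# Hypothetical per-bank counts of flagged transactions (private inputs).
bank_counts = {"bank_a": 12, "bank_b": 7, "bank_c": 30}
n = len(bank_counts)

# Each bank splits its count and sends one share to every participant.
all_shares = [share(count, n) for count in bank_counts.values()]

# Participant i adds up the i-th share from every bank. A single share is a
# uniformly random number, so it reveals nothing about the count behind it.
partial_sums = [sum(column) % PRIME for column in zip(*all_shares)]

# Only the combined partial sums reveal the joint total.
total = reconstruct(partial_sums)
print(total)  # 49
```

The individual counts never leave their owners in readable form, yet the joint statistic used for fraud detection comes out exact.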

Working Use Cases

A few companies are already working on solutions to AI memory problems and on how to implement portable memory systems.

- Plurality is developing the Open Context Layer. It allows users to autonomously store their data and chats in specific memory buckets for better context management, and also for sharing.

- MemSync is building the Unified Memory Layer. It allows users to create a persistent “digital twin” based on personalized conversation and knowledge. This enables the AI system to know the user, track their evolving ideas, and serve as a private sounding board for reflection and decision-making.

- Ekai is offering a developer-focused solution. It allows users to switch among various AI models via smart model routing without context loss.

The future of AI memory? A portable context model. This means that you, as a user, are not obligated to commit to a single model or AI assistant, or to have your digital history trapped in large platforms like OpenAI or Anthropic. When you switch between tools as you need and choose, the memory travels with you, encrypted and under your control, working like a neutral, interoperable memory layer.

Realizing this vision requires a fundamental reimagining of memory as infrastructure. We can combine stateful memory loops, DeAI, and confidential computing technologies like Oasis ROFL and Sapphire to build AI systems that are smarter and personalized while also being fundamentally secure and user-owned. Result: Private, portable AI memory that is a sovereign asset, free from data privacy liability.

Originally published at https://dev.to on February 23, 2026.

AI Has a Memory Problem. Decentralization and Privacy Might Have a Solution. Part 3 was originally published in Coinmonks on Medium.